Social psychology is studying itself, and this time it’s doing it right

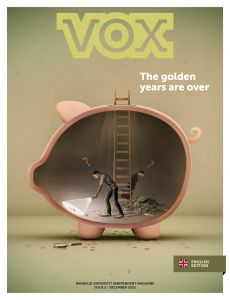

Illustrative photo. By Dick van Aalst

Social psychology has been in the doghouse since the affair surrounding Diederik Stapel, whose research results turned out to be largely unfounded. In an effort to turn the tide, an international group of scientists, with Radboud researcher Fred Hasselman among its leaders, carried out a mega-study in which they repeated dozens of experiments. The results were mixed.

People who experience feelings of disgust more often than others are also more often homophobic. The more siblings you have, the more sociable you are. These are just two examples of classic findings in social psychology. Except that they’re not true. This is revealed in a large-scale study that will appear soon in the journal Advances in Methods and Practices in Psychological Science. The results can already be seen online on the preprint server PsyArXiv.

Known as the Many Labs 2 Study and led by Brian Nosek of the Center for Open Science in Charlottesville (US), the study stems from the ‘replication crisis’ in social psychology. At the beginning of this decade, it was discovered that research results often could not be confirmed when psychologists from other labs repeated experiments. The question arose as to how reliable social psychology actually is as an area of science.

The crisis deepened in 2011 with the fall of Tilburg professor Diederik Stapel, who turned out to have simply made up the data for dozens of experiments. In response, several psychologists decided to take a very close look at their own field, in an attempt to restore faith in it. On the initiative of Brian Nosek, no fewer than one hundred studies were repeated in 2015. In more than half the cases, the original findings did not hold up.

Fictitious cities

Many Labs 2 is a follow-up study. Of the 28 claims studied by the researchers this time, half turned out to be untenable or even to show the opposite relationship, according to Nijmegen psychologist Fred Hasselman. The assistant professor in orthopedagogics and educational sciences, and fellow of the Behavioural Science Institute, was one of the four lead researchers of Many Labs 2.

Hasselman gives one example: a study of where participants think the richest people live in a fictitious city. ‘That was said to be culturally determined: participants from the US pointed to fictitious cities in the north on a map, and participants in Hong Kong pointed to those in the south.’ The repeat study showed that there was, in fact, no difference.

‘What on earth was the old guard doing all that time?’

The fact that many original studies turn out to be incorrect does not automatically mean that the lead researchers committed fraud, Hasselman emphasises. ‘The experiments were often carried out with very few participants: ten, twenty at the most. The risk of finding a certain relationship by chance is greater in that case.’ He also knows that researchers in those days were a bit careless with statistics. ‘For example, they would keep adding extra participants until the desired effect was achieved. Statistically, that’s cheating.’
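Why adding participants until the effect appears counts as cheating can be illustrated with a small simulation. The sketch below is not part of Many Labs 2. It is a minimal illustration under stated assumptions: there is no true effect at all, every participant's score is a random draw from a standard normal distribution, and a simple two-sided z-test at the 5 percent level decides whether a result is 'significant'. The function names, the starting sample of 10 and the maximum of 50 participants are all hypothetical choices made for this example.

```python
import math
import random


def z_significant(total, n, z_crit=1.96):
    """Two-sided z-test of 'mean is zero' for n draws with known standard deviation 1."""
    return abs(total / math.sqrt(n)) > z_crit


def false_positive_rate(peek, n_start=10, n_max=50, runs=4000, seed=1):
    """Share of simulated experiments WITHOUT any true effect that still come out 'significant'.

    All parameter values are illustrative assumptions, not figures from the study.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(runs):
        total = 0.0
        n = 0
        significant = False
        while n < n_max:
            total += rng.gauss(0.0, 1.0)  # one more participant, no real effect
            n += 1
            # Optional stopping: test after every new participant and stop at the first hit
            if peek and n >= n_start and z_significant(total, n):
                significant = True
                break
        if not peek:
            # Honest procedure: test once, at the sample size fixed in advance
            significant = z_significant(total, n_max)
        hits += significant
    return hits / runs


if __name__ == "__main__":
    print("fixed sample size:", false_positive_rate(peek=False))
    print("keep adding participants:", false_positive_rate(peek=True))
```

With a sample size fixed in advance, roughly one in twenty of these effect-free experiments should come out significant, as the 5 percent threshold promises. When the test is repeated after every added participant, the share of false alarms rises well above that, even though nobody has tampered with a single data point.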

WEIRD

To avoid such pitfalls, Hasselman and his colleagues took a rigorous approach. Each of the 28 studies was repeated at least 60 times, by labs in 36 different countries. This often brought the total number of participants to more than five thousand per experiment, and made the samples less WEIRD (‘Western, Educated, Industrialized, Rich, & Democratic’) than is generally the case. Hasselman: ‘I think it is without a doubt the largest repeat study ever carried out, at least with human participants.’

The research protocols were published beforehand too (after they had been submitted to the original researchers for any adjustments deemed necessary). This meant that the procedure, research materials and analyses were all recorded. In order to ensure even more transparency, Hasselman and his team made all the data collected and analyses freely available, in an online database designed by the psychologist from Nijmegen.

‘Social psychology is studying itself, and that’s a good thing’

Thanks to the dozens of repeats, the researchers were also able to rule out other explanations when a certain relationship no longer showed up, Hasselman explains. ‘In the past, the original researchers pointed to “special circumstances” as the reason for something having worked in their studies. Circumstances such as cultural differences or specific stimulus material. We were now able to rule out those hidden influences.’

Mastodon

Hasselman doesn’t view the results as confirmation that there is still a lot wrong in the social sciences. ‘On the contrary! Social psychology is studying itself, and that’s a good thing. There are now far more journals accepting replication studies than, let’s say, five years ago. Back then, that never happened.’

Many young scientists view the old guard with mixed feelings, according to Hasselman. ‘“What were you doing all that time?” they ask.’ However, he doesn’t believe that the mastodons should be burnt at the stake. ‘Where does that get you? Unless of course someone actually committed fraud, but you can’t directly prove that with our study. It’s better to focus on the new generation.’

Neuroscience

Eric Maris, too, is optimistic about the future. Maris is an associate professor at the Donders Institute, as well as an expert in statistics and methods in behavioural science and neuroscience. ‘Large-scale replication studies like this are still a way of catching up. After this, studies will be repeated much faster,’ he expects. ‘These days, there is already much more focus on statistical power: drawing conclusions based on a sufficient number of participants.’

The latter is a good step, Maris emphasises, but he believes that it’s even more important that researchers start documenting their research data and analyses much more efficiently. ‘That should really happen more often, certainly in neuroscience.’

At present, researchers still have too much freedom to analyse data as they see fit, he says, without anyone checking whether alternative calculations might lead to other conclusions. ‘It would be best if scientists were to publish all their calculations in an online database, together with the data. Then they themselves, or others, can subsequently check in a new group of participants whether the results hold up.’ He hopes that research funding organisations such as NWO will exert more pressure to achieve this. ‘It’s already the standard in large genetics studies.’